TL;DR: AI support succeeds when it’s treated as an operational system, not a plug‑and‑play tool. Clear ownership, clean support data, controlled automation, and seamless human handoffs determine whether AI reduces effort or creates friction. Teams that fix these fundamentals turn AI into a lasting support advantage.

AI in customer support rarely fails overnight; it breaks down through small, avoidable challenges that compound over time.

These AI support implementation mistakes often show up as inaccurate responses, broken handoffs, and frustrated customers, even when the technology itself is sound.

The risk is higher than many teams expect. Gartner reports that only 28% of AI initiatives meet ROI expectations, with most falling short due to gaps in strategy, data quality, and execution.

Understanding where AI implementations go wrong, and how to fix them, is the difference between scalable support and costly automation failures.

Let’s break down the most common AI support implementation mistakes and how you can avoid them to build a more effective support system.

Quick comparison of AI implementation mistakes

This comparison table highlights the most common AI implementation challenges and their real‑world impact.

It outlines how each issue affects customer support outcomes and the practical steps teams can take to address them effectively.

| Mistake | Impact on support | How to fix |
| --- | --- | --- |
| Unclear strategy | No measurable ROI | Connect AI goals to CSAT, AHT, and SLAs |
| Poor support data | Wrong responses, escalations | Clean, unify support data |
| Unrealistic expectations | Over‑automation | Start simple, expand gradually |
| Over‑reliance on AI | Workflow friction | Keep agents in the loop |
| No agent training | Low adoption | Train support teams + communicate |
| No monitoring | AI performance drift | Track metrics, optimize |
| Weak handoffs | Customer frustration | Pass full context to agents |

7 AI support implementation mistakes and how to fix them

AI doesn’t fail; your workflows do. While AI can significantly enhance customer support efficiency, many companies hastily implement AI initiatives and subsequently experience underwhelming results.

In most cases, AI-powered customer support success depends more on implementation than on the technology itself.

According to Gartner, 91% of service and support leaders feel pressure from executives to adopt AI, which often leads to rushed decisions and gaps in strategy, data, and adoption.

Below are the most common implementation mistakes companies make when rolling out AI in customer support, and how to fix them.

Mistake #1: Unclear strategic direction and misalignment with business goals

Many organizations adopt AI customer service because it’s trending, rather than to solve a clearly defined support challenge.

When AI is implemented without specific, measurable objectives, such as reducing response times, improving CSAT, or lowering ticket volume, it lacks direction and often fails to deliver meaningful results.

Why this mistake happens

- Pressure to “use AI” without a clear support strategy

- Tool‑driven decisions instead of outcome‑driven planning

- No shared definition of customer success across teams

How to fix it

- Define clear business and support goals before selecting AI support tools

- Align AI in customer service use cases with measurable outcomes like CSAT, SLAs, or cost per ticket

- Treat AI as a capability that supports strategy, not the strategy itself

Mistake #2: Poor data quality or insufficient training data

AI systems are only as effective as the data they rely on. When support data, such as ticket history, chat transcripts, call logs, and knowledge base content, is incomplete, outdated, or fragmented across systems, AI delivers unreliable and inconsistent responses.

This directly results in failed self‑service resolution, increased ticket escalations, longer handle times, and minimal improvement in core support metrics like CSAT and cost per ticket.

Even advanced AI tools struggle to drive meaningful outcomes without clean, structured, and accessible support data.

Why this mistake happens

- Customer and support data scattered across multiple tools and platforms

- Incomplete or outdated ticket history, customer records, or knowledge base content

- Limited time and resources allocated to data cleaning and preparation

- Weak data ownership and governance practices

How to fix it

- Audit existing support data sources (ticketing systems, chat logs, call transcripts, and knowledge bases) to assess completeness, accuracy, and coverage of top customer issues

- Define a support data integration plan that ensures the AI platform can access unified ticket history, customer context, and relevant content

- Centralize or unify key support data sources so AI systems operate from a consistent view of customer service problems and proven resolutions

- Establish governance for support data and content, including clear ticket ownership and labeling standards, to prevent AI performance degradation over time
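The audit step above can be sketched in a few lines of Python. This is a minimal illustration, not a BoldDesk feature: the `Article` shape, the 180‑day staleness threshold, and the sample topics are all assumptions made for the example. It flags knowledge base articles that have not been updated recently and top ticket topics that have no article at all.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class Article:
    """A knowledge base article (hypothetical shape, for illustration only)."""
    topic: str
    last_updated: date


def audit_knowledge_base(articles, top_ticket_topics, today, max_age_days=180):
    """Return (stale_topics, uncovered_topics) for a knowledge base.

    stale_topics: topics whose article is older than max_age_days (assumed threshold).
    uncovered_topics: top ticket topics with no article at all.
    """
    cutoff = today - timedelta(days=max_age_days)
    stale = sorted(a.topic for a in articles if a.last_updated < cutoff)
    covered = {a.topic for a in articles}
    uncovered = sorted(t for t in top_ticket_topics if t not in covered)
    return stale, uncovered


# Sample data, invented for the example.
articles = [
    Article("password reset", date(2025, 1, 10)),
    Article("refund policy", date(2023, 3, 2)),
]
top_topics = ["password reset", "refund policy", "order status"]

stale, uncovered = audit_knowledge_base(articles, top_topics, today=date(2025, 3, 1))
print("Stale articles:", stale)
print("Topics without an article:", uncovered)
```

In practice the list of top ticket topics would come from your ticketing system's reports, and the threshold would depend on how often your product and policies change.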

Mistake #3: Unrealistic expectations for AI customer service outcomes

AI can improve efficiency and scale support, but it is not designed to replace human decision‑making.

Expecting AI in customer support to immediately resolve every issue or deliver smooth experiences from the start often results in over‑automation, poor customer outcomes, and internal friction.

Why this mistake happens

- AI is seen as a shortcut to replacing agents

- Expectations are driven by hype rather than reality

- Success metrics are unclear or short‑term

How to fix it

- Define clear boundaries for AI in support workflows by specifying which requests AI should handle end‑to‑end (FAQs, order status, password resets) and which should always involve a human agent

- Start with simple, high‑volume support use cases such as ticket categorization, response suggestions, or knowledge surfacing before expanding to more complex interactions

- Measure success using support‑specific metrics like deflection rate, average handle time, CSAT, and escalation volume, rather than broad AI performance claims
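One way to make those boundaries explicit is to write them down as a routing rule. The sketch below is a simplified assumption of how that might look: the intent names, the 0.8 confidence threshold, and the `route_request` function are hypothetical and not tied to any specific product. The key design choice is that anything sensitive, unknown, or low‑confidence defaults to a human agent.

```python
# Intents the AI may resolve end-to-end (based on the examples in this section).
AI_HANDLED_INTENTS = {"faq", "order_status", "password_reset"}

# Intents that always go to a human agent (assumed list, for illustration).
HUMAN_ONLY_INTENTS = {"billing_dispute", "cancellation", "complaint"}


def route_request(intent: str, confidence: float, min_confidence: float = 0.8) -> str:
    """Decide who handles a request: "ai" or "human".

    Sensitive, unrecognized, or low-confidence requests fall back to a human.
    """
    if intent in HUMAN_ONLY_INTENTS:
        return "human"
    if intent in AI_HANDLED_INTENTS and confidence >= min_confidence:
        return "ai"
    return "human"


print(route_request("password_reset", 0.93))  # ai
print(route_request("password_reset", 0.55))  # human (low confidence)
print(route_request("cancellation", 0.99))    # human (always human)
print(route_request("warranty_claim", 0.90))  # human (unknown intent)
```

Starting with a short allow‑list like this, and expanding it only as metrics confirm the AI handles each intent well, matches the "start simple, expand gradually" approach.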

Mistake #4: Over-reliance on AI technology

Many AI initiatives in customer support fall short due to unrealistic expectations that the technology can operate independently.

Introducing AI without adapting agent workflows, escalation rules, and ownership often creates friction instead of efficiency.

This typically shows up through unclear handoffs and disruptions to existing ticket workflows, limiting the impact on resolution times, escalations, and customer satisfaction.

Why this mistake happens

- Assumption that AI can independently resolve support issues without agent involvement

- Limited agent training on how to review, edit, or override AI‑generated responses

- Support workflows designed for manual triage, escalation, and resolution

- No clear ownership for monitoring AI performance in live support queues

How to fix it

- Map support workflows end‑to‑end to define where AI assists (ticket classification, response suggestions, summarization) and where agents must take control

- Train support agents on how to use, validate, and refine AI suggestions during real customer interactions

- Keep agents in the loop by making AI a decision‑support tool, not a replacement, especially for billing issues, cancellations, complaints, and edge cases

Mistake #5: Ignoring support agent training

Even well‑designed AI support systems can fail if support teams aren’t prepared to use them.

When agents don’t understand how AI fits into their workflows, or don’t trust its outputs, adoption stalls and performance suffers.

Implementing AI without adequate agent training and communication often results in inconsistent usage, manual workarounds, and resistance from the teams it is intended to support.

Why this mistake happens

- AI is introduced as a technical upgrade, not a workflow change

- Support agents are not trained on when or how to work with AI

- Internal communication focuses on tools instead of impact

How to fix it

- Train support agents on AI‑assisted workflows and decision points

- Position AI as a support tool, not a replacement

- Reinforce adoption with clear guidance and feedback loops

Mistake #6: Not monitoring or optimizing performance

Launching AI support without ongoing monitoring often leads to declining response quality and missed opportunities for improvement.

As customer behavior, products, and language evolve, AI performance can quickly drift if left unmanaged.

Without regular optimization, AI that initially performs well may become inaccurate or ineffective over time.

Why this mistake happens

- AI is treated as a one‑time deployment

- Performance tracking is limited or not prioritized

- Feedback loops are missing or unclear

How to fix it

- Track AI performance using defined metrics

- Review failed customer interactions and escalation patterns regularly

- Continuously refine workflows and knowledge sources

Example: Initially, AI reduces ticket volume by handling common questions effectively. Months later, customer language and product features change, but AI behavior isn’t monitored or optimized. Incorrect responses become common, and agents stop trusting AI suggestions entirely.

Impact: AI adoption drops internally, and the organization quietly reverts to manual processes.
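The kind of drift described in this example can often be caught early with a simple recurring check. The Python sketch below is a minimal illustration under stated assumptions: the `WeeklyStats` fields, the four‑week baseline, and the 5‑point tolerance are invented for the example, and escalation rate is used as a stand‑in for whichever metrics your team has defined. It compares each later week against the baseline and flags weeks where escalations rise noticeably.

```python
from dataclasses import dataclass


@dataclass
class WeeklyStats:
    """Aggregated AI support numbers for one week (hypothetical fields)."""
    week: str
    ai_conversations: int  # conversations the AI attempted to handle
    escalated: int         # of those, how many were escalated to an agent


def escalation_rate(stats: WeeklyStats) -> float:
    """Share of AI conversations that ended in an escalation."""
    if stats.ai_conversations == 0:
        return 0.0
    return stats.escalated / stats.ai_conversations


def find_drift(history, baseline_weeks=4, tolerance=0.05):
    """Return weeks whose escalation rate exceeds the baseline by more than tolerance.

    The baseline is the mean escalation rate of the first `baseline_weeks` weeks.
    """
    baseline_slice = history[:baseline_weeks]
    if not baseline_slice:
        return []
    baseline = sum(escalation_rate(w) for w in baseline_slice) / len(baseline_slice)
    return [w.week for w in history[baseline_weeks:]
            if escalation_rate(w) > baseline + tolerance]


# Sample data, invented for the example.
history = [
    WeeklyStats("week 1", 100, 20),
    WeeklyStats("week 2", 100, 20),
    WeeklyStats("week 3", 100, 20),
    WeeklyStats("week 4", 100, 20),
    WeeklyStats("week 5", 100, 22),  # within tolerance
    WeeklyStats("week 6", 100, 31),  # escalations rising
]

drifted = find_drift(history)
print("Weeks needing review:", drifted)
```

A flagged week is a prompt to review failed interactions and update knowledge sources, not a verdict on its own.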

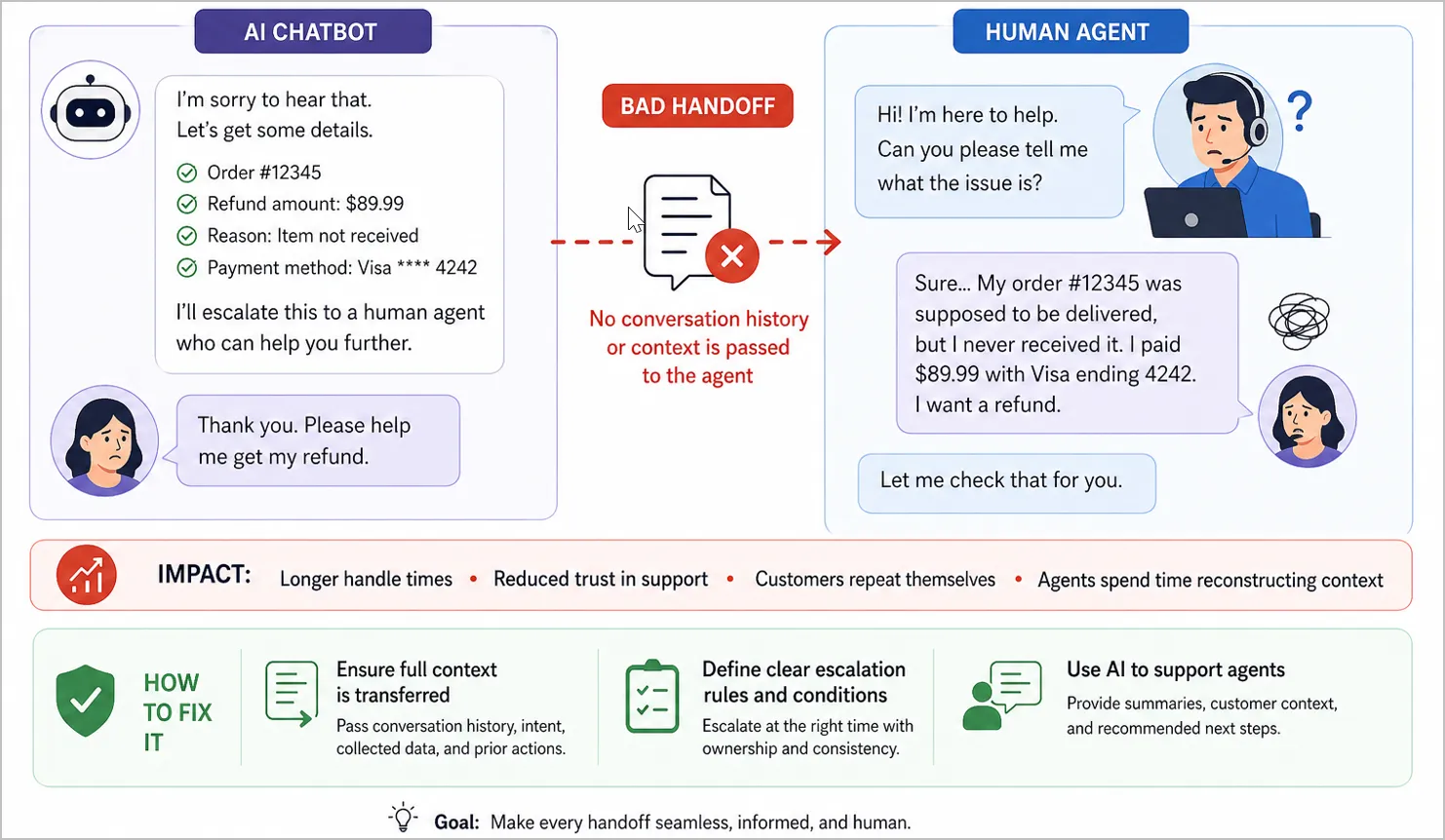

Mistake #7: Creating a bad AI-to-human handoff

When AI escalates an issue without transferring the full context, customers are forced to repeat themselves, and agents lose valuable time reconstructing the conversation.

This breaks customer engagement continuity and quickly leads to frustration.

A weak handoff can undo the benefits of automation by making interactions feel disorganized and impersonal.

Why this mistake happens

- Escalation workflows lack clear design and ownership

- AI interactions are not linked to agent workflows

- Context, intent, or prior actions are not passed during handoff

How to fix it

- Ensure full context is transferred during escalation

- Define clear escalation rules and handoff conditions

- Use AI to support agents with summaries and next steps

Example: An AI support tool collects detailed information about a failed refund but escalates the ticket without transferring the conversation history to the support agent. The agent asks the customer to repeat everything from scratch, adding frustration to an already sensitive issue.

Impact: Longer handle times, reduced trust in support, and agents spending time repairing workflows instead of resolving issues.
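To make "full context" concrete, it can help to define what a handoff must contain before an escalation is allowed. The sketch below is an assumption about what such a structure could look like, not a description of any vendor's API: `HandoffPacket`, `build_handoff`, and all field names are hypothetical. It bundles the transcript, detected intent, prior actions, and a suggested next step, and refuses to escalate when the conversation history is missing.

```python
from dataclasses import dataclass, field


@dataclass
class Message:
    sender: str  # "customer" or "ai"
    text: str


@dataclass
class HandoffPacket:
    """Everything an agent needs to continue without asking the customer to repeat."""
    ticket_id: str
    detected_intent: str
    transcript: list
    actions_taken: list = field(default_factory=list)
    suggested_next_step: str = ""


def build_handoff(ticket_id, intent, transcript, actions_taken, next_step):
    """Assemble the handoff, refusing to escalate with an empty transcript."""
    if not transcript:
        raise ValueError("Cannot hand off without conversation history")
    return HandoffPacket(ticket_id, intent, list(transcript),
                         list(actions_taken), next_step)


# Sample conversation, invented for the example.
transcript = [
    Message("customer", "My refund for order 1042 failed twice."),
    Message("ai", "I see two failed refund attempts. Escalating to an agent."),
]
packet = build_handoff(
    ticket_id="T-1001",
    intent="refund_failed",
    transcript=transcript,
    actions_taken=["looked up order", "verified refund attempts"],
    next_step="Retry refund manually or offer store credit",
)
print(packet.detected_intent, len(packet.transcript), packet.suggested_next_step)
```

With a check like this in place, the refund scenario above would reach the agent with the history attached, or not escalate silently at all.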

Avoiding these AI implementation challenges requires more than awareness; it requires the right tools and guardrails to operationalize AI responsibly.

How BoldDesk helps you avoid these AI customer support implementation mistakes

A failed AI interaction doesn’t just slow down support; it erodes customer connection, increases the risk of churn, and drives up operational support costs.

Inaccurate responses, unnecessary escalations, and broken conversations quickly turn automation into frustration.

BoldDesk helps teams run AI the right way, combining automation with control, visibility, and agent support.

The result: AI that strengthens customer support instead of getting in the way.

Here is how BoldDesk helps prevent common AI support mistakes:

- Reduce escalations with AI that supports, not replaces, agents: BoldDesk AI Copilot provides agents with accurate response suggestions, conversation summaries, and full customer context during active support interactions. This helps agents respond faster and more confidently.

- Detect and fix AI failures before customers feel the impact: Built‑in analytics provide visibility into AI accuracy, escalation trends, and response quality across support workflows. Support teams can identify where AI struggles and make targeted improvements early.

- Deliver consistent, accurate answers at scale: BoldDesk uses a centralized, structured knowledge base to keep AI responses aligned with the latest product updates, policies, and support content. This prevents outdated or conflicting information across channels as support volume grows.

- Eliminate data silos that weaken AI accuracy: BoldDesk integrates with CRM and business systems to give AI access to complete customer context across tickets, conversations, and history. This allows AI to classify intent and route issues more accurately.

- Ensure seamless AI‑to‑human handoffs without repetition: When AI escalates a conversation, BoldDesk transfers full conversation history, detected intent, and suggested next steps to the agent. Customers never have to repeat themselves, and agents can act immediately.

Turn AI customer support implementation challenges into success

AI elevates customer support only when implemented with intention.

By avoiding common mistakes, teams deliver faster resolutions, maintain seamless customer journeys, and improve CSAT, without sacrificing the customer experience.

Looking to scale support with AI without hidden costs or churn risk? BoldDesk combines automation and AI assistance to help you deliver reliable support outcomes from day one.

BoldDesk offers full feature access across all plans, with free AI credits, transparent pricing, and optional AI Agent and AI Copilot add‑ons to boost support efficiency.

Start a free trial to see BoldDesk in action or book a live demo to explore how AI‑powered support can work for your team.

Have questions or insights to share? Drop them in the comments. We’d love to hear from you.

Frequently Asked Questions

What are AI support implementation mistakes?

AI support implementation mistakes are common errors organizations make when deploying AI for customer or internal support that reduce effectiveness, ROI, or user trust.

How does BoldDesk support AI-driven customer support?

BoldDesk enables AI-driven support through features like automated ticket handling, response suggestions, and smart knowledge base recommendations to improve speed and efficiency.

It also ensures a balanced approach by letting teams review and control AI outputs, maintaining accuracy, transparency, and a human touch.

Which metrics should you track for AI in customer support?

The key customer service metrics to track include CSAT, response time, containment rate, and escalation quality.

How can businesses avoid AI implementation failures?

Businesses can avoid AI implementation failures by setting clear goals, using high-quality data, maintaining human oversight, and continuously monitoring AI performance.

How long does it take to implement AI in customer support?

With the right strategy, data readiness, and human oversight, AI in customer support can be implemented in 8–12 weeks without costly mistakes or a compromised customer experience.